This wiki page documents the files created by the DETER containerization system. I created a simple containerized experiment, examined all of the generated files, and documented their contents below.

Per-Experiment Static Files

The sample experiment is muppetnet in the Deter project. The NS file and the containerization command are:

```tcl
set ns [new Simulator]
source tb_compat.tcl

set opt(netSpeed) 100Mb

set allNodes "beaker beauregard brewster drbunsenhoneydew floyd lips mousey rizzo pops kermit piggy fozzie drteeth animal janice"
set studNodes "beaker beauregard brewster drbunsenhoneydew kermit piggy fozzie"
set mayhemNodes "floyd lips mousey rizzo pops drteeth animal janice"

foreach node $allNodes {
    set $node [$ns node]
}

# link between LANS
set rainbow [$ns duplex-link "fozzie" "janice" 100Mb 0.0ms DropTail]

# two LANs
set studebaker [$ns make-lan $studNodes $opt(netSpeed) 0.0ms]
set electricmayhem [$ns make-lan $mayhemNodes $opt(netSpeed) 0.0ms]

$ns rtproto Static
$ns run
```

```
[glawler@users:~/src/nsfiles]$ /share/containers/containerize.py DETER muppetnet ./muppetnet.ns
```

This creates the directory /proj/Deter/exp/muppetnet, which contains a containers directory with the following files:

```
-rw-r--r-- 1 glawler Deter  9668 Feb 12 07:25 annotated.xml
-rw-r--r-- 1 glawler Deter   142 Feb 12 07:25 assignment
-rw-r--r-- 1 glawler Deter    68 Feb 12 07:25 backend_config.yaml
drwxr-xr-x 2 glawler Deter  1024 Feb 12 07:25 children
-rw-r--r-- 1 glawler Deter  7246 Feb 12 07:26 config.tgz
-rw-r--r-- 1 glawler Deter  5660 Feb 12 07:25 converted.xml
-rw-r--r-- 1 glawler Deter  2432 Feb 12 07:25 embedding.yaml
-rw-r--r-- 1 glawler Deter   662 Feb 12 07:25 experiment.tcl
-rw-r--r-- 1 glawler Deter     0 Feb 12 07:25 ghosts
-rw-r--r-- 1 glawler Deter   793 Feb 12 07:26 hosts
-rw-r--r-- 1 glawler Deter     6 Feb 12 07:26 hostsw.pickle
-rw-r--r-- 1 glawler Deter  1315 Feb 12 07:25 maverick_url
-rw-r--r-- 1 glawler Deter  1690 Feb 12 07:25 openvz_guest_url
-rw-r--r-- 1 glawler Deter 11063 Feb 12 07:25 partitioned.xml
-rw-r--r-- 1 glawler Deter   816 Feb 12 07:25 phys_topo.ns
-rw-r--r-- 1 glawler Deter  1120 Feb 12 07:25 phys_topo.xml
-rw-r--r-- 1 glawler Deter    16 Feb 12 07:25 pid_eid
-rw-r--r-- 1 glawler Deter    54 Feb 12 07:25 pnode_types.yaml
-rw-r--r-- 1 glawler Deter    10 Feb 12 07:25 qemu_users.yaml
drwxr-xr-x 2 glawler Deter   512 Feb 12 07:26 route
-rw-r--r-- 1 glawler Deter   205 Feb 12 07:26 shaping.yaml
-rw-r--r-- 1 glawler Deter   732 Feb 12 07:25 site.conf
drwxr-xr-x 2 glawler Deter   512 Feb 12 07:26 software
drwxr-xr-x 2 glawler Deter   512 Feb 12 07:26 switch
-rw-r--r-- 1 glawler Deter   708 Feb 12 07:26 switch_extra.yaml
-rw-r--r-- 1 glawler Deter     6 Feb 12 07:26 swlocs.pickle
-rw-r--r-- 1 glawler Deter 36759 Feb 12 07:26 topo.xml
-rw-r--r-- 1 glawler Deter  3136 Feb 12 07:26 traffic_shaping.pickle
-rw-r--r-- 1 glawler Deter 66961 Feb 12 07:26 visualization.png
-rw-r--r-- 1 glawler Deter     3 Feb 12 07:26 wirefilters.yaml
```

Notes on these files:

annotated.xml - An XML file that describes the nodes and substrates (networks) of the experiment. Each node description includes the name, interfaces, container type, and node type (e.g., pc).

assignment - Maps each container to the physical node it runs on, e.g. node "foo" runs on physical node 1.

backend_config.yaml - The backend server address and a unique ID, in YAML: {server: 'boss.isi.deterlab.net:6667', unique_id: fdb56b6d989ff6a4}

children - Purpose not fully clear. A directory of files that appear to describe a DAG of the relationships between pnodes, OpenVZ nodes, and container nodes. Most of the files are empty in this experiment because the network topology is minimal.

config.tgz - A gzipped tarball of this directory (./containers). After the pnode boots, setup/hv/hv untars it into /var/containers/config on the pnode.
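To check what will be unpacked into /var/containers/config on a pnode, you can list the tarball before swapping in. A minimal sketch using Python's standard tarfile module; it only inspects the archive and is not how setup/hv/hv itself unpacks it (the helper name is hypothetical):

```python
import tarfile


def list_config_tarball(path):
    """Return the sorted member names of a containers config.tgz."""
    # "r:gz" opens a gzip-compressed tar archive for reading only.
    with tarfile.open(path, "r:gz") as tar:
        return sorted(member.name for member in tar.getmembers())
```

For example, list_config_tarball("/proj/Deter/exp/muppetnet/containers/config.tgz") returns the archived paths, which should mirror the listing above.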

converted.xml - The same as annotated.xml, but without the added container information.

embedding.yaml - container and pnode relationships in YAML.

experiment.tcl - The NS file which generated the experiment.

ghosts - empty file

hosts - An /etc/hosts file that includes entries for the container nodes.

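hostsw.pickle - A 6-byte Python pickle (Python's binary serialization format) whose entire contents are (dp0\n. (the 6 bytes are '(', 'd', 'p', '0', a newline, and '.'). That is a complete protocol-0 pickle of an empty dictionary, so in this experiment it unpickles to {}. Its purpose is unknown.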
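Those six bytes can be checked with the standard pickle and pickletools modules. A minimal sketch, with the bytes copied into the script rather than read from disk:

```python
import pickle
import pickletools

# The six bytes reported for hostsw.pickle in this experiment.
HOSTSW_BYTES = b"(dp0\n."

# Disassemble the opcodes: MARK, DICT, PUT 0, STOP.
pickletools.dis(HOSTSW_BYTES)

# DICT with nothing between it and the MARK builds an empty dictionary.
data = pickle.loads(HOSTSW_BYTES)
print(data)  # {}
```

To read the real file, open it in binary mode: with open("hostsw.pickle", "rb") as f: pickle.load(f).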

maverick_url - URLs of container images. The default location is http://scratch/benito/.... Probably used for qemu images.

openvz_guest_url - URLs of OpenVZ images. The default location is /share/containers/images/...

partitioned.xml - Another XML file, used in the partitioning process. See annotated.xml.

phys_topo.ns, phys_topo.xml - The NS file and the XML description of the experiment's physical topology (its physical nodes).

pid_eid - The project ID (pid) and experiment ID (eid) of the experiment.

pnode_types.yaml - The machine types of the pnodes (pc2133, bpc2133, MicroCloud), in YAML.

qemu_users.yaml - Apparently the list of users for the qemu nodes. In this experiment it contains only [glawler], so it is not an exhaustive list of project users.

route - Routing information for the containers: a directory with one file per node containing that node's routes, e.g. 10.0.0.0/8 10.0.1.7 (presumably a destination prefix followed by a gateway).
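Assuming each line of a route file is a destination prefix followed by a gateway address, as in the example above, the files can be parsed and sanity-checked with Python's ipaddress module. This is a hypothetical inspection helper only: the routes are actually installed by /var/containers/launch/routes.py, whose exact input handling is not documented here, and rendering them as ip route add commands is just one way to express them:

```python
import ipaddress


def parse_routes(text):
    """Parse assumed 'PREFIX GATEWAY' lines into (network, gateway) pairs."""
    routes = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) != 2:
            continue  # skip blank or unexpected lines
        network = ipaddress.ip_network(fields[0])  # raises ValueError if malformed
        gateway = ipaddress.ip_address(fields[1])
        routes.append((network, gateway))
    return routes


def to_ip_route_commands(routes):
    """Render each route as an iproute2 'ip route add' command line."""
    return [f"ip route add {net} via {gw}" for net, gw in routes]


print(to_ip_route_commands(parse_routes("10.0.0.0/8 10.0.1.7\n")))
```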

shaping.yaml - traffic shaping for container networks.

site.conf - Configuration settings for the containers system, for example:

```ini
[containers]
switch_shaping = true
qemu_host_hw = pc2133,bpc2133,MicroCloud
openvz_guest_url = %(exec_root)s/images/ubuntu-10.04-x86.tar.gz
exec_root = /share/containers
qemu_host_os = Ubuntu1204-64-STD
openvz_host_os = CentOS6-64-openvz
maverick_url = http://scratch/benito/pangolinbz.img.bz2
xmlrpc_server = boss.isi.deterlab.net:3069
url_base = http://www.isi.deterlab.net/
grandstand_port = 4919
backend_server = boss.isi.deterlab.net:6667
openvz_template_dir = %(exec_root)s/images/
attribute_prefix = containers
switched_containers = qemu,process
```
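site.conf uses INI syntax, and its %(exec_root)s references match the interpolation syntax of Python's configparser. A sketch, assuming configparser semantics (the containers scripts may read the file differently), that expands openvz_guest_url to a concrete path using an excerpt of the file:

```python
import configparser

# Excerpt of the site.conf shown above.
SITE_CONF = """\
[containers]
switch_shaping = true
qemu_host_hw = pc2133,bpc2133,MicroCloud
openvz_guest_url = %(exec_root)s/images/ubuntu-10.04-x86.tar.gz
exec_root = /share/containers
openvz_template_dir = %(exec_root)s/images/
"""

# The default BasicInterpolation expands %(name)s from the same section,
# regardless of whether exec_root is defined before or after its use.
config = configparser.ConfigParser()
config.read_string(SITE_CONF)
section = config["containers"]

print(section["openvz_guest_url"])          # /share/containers/images/ubuntu-10.04-x86.tar.gz
print(section.getboolean("switch_shaping"))  # True
print(section["qemu_host_hw"].split(","))    # ['pc2133', 'bpc2133', 'MicroCloud']
```

For the full file, call config.read("/proj/Deter/exp/muppetnet/containers/site.conf") instead of read_string.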

software - empty directory

switch - A directory with one file per switch node (pnode). Each file describes VLAN creation and numbering, plus the switch port/VLAN assignments for the containers on that node. Does this get fed to the vde_switch instances on the pnodes?

switch_extra.yaml - A YAML file that appears to describe how the VDE switches are glued together: extra interfaces (taps), double pipes (which, I think, connect switches to each other), and tap pipes (which attach tap interfaces to a switch).

swlocs.pickle - Another 6-byte Python pickle, the same size as hostsw.pickle, so it probably also holds just an empty dictionary in this experiment. Its purpose is unknown.

topo.xml - The container topology in XML format. It lists the substrates (networks). For each node it shows the interfaces and addressing (IP/MAC), as well as which vde_switch the node is attached to. Nodes also carry some VM information, such as disk size, template/image path and name, and vhost.

traffic_shaping.pickle - Traffic-shaping information, stored, oddly, in Python pickle format:

```
>>> import pickle
>>> import pprint
>>> fd = open('traffic_shaping.pickle', 'rb')
>>> d = pickle.load(fd)
>>> pprint.pprint(d)
{u'animal': {u'10.0.0.1': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.2': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.3': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.4': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.5': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.6': {'bandwidth': None, 'delay': None, 'loss': None},
             u'10.0.0.8': {'bandwidth': None, 'delay': None, 'loss': None}},
 ...
 u'rizzo': {u'10.0.0.1': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.2': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.3': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.5': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.6': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.7': {'bandwidth': None, 'delay': None, 'loss': None},
            u'10.0.0.8': {'bandwidth': None, 'delay': None, 'loss': None}}}
```
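Every value in the dump above is None, which presumably means no shaping is applied. With many nodes it is easier to filter than to read the dump. A minimal sketch (both helper names are hypothetical) that loads the pickle and reports only entries with at least one non-None setting:

```python
import pickle


def load_shaping(path):
    """Load traffic_shaping.pickle; pickles must be opened in binary mode."""
    with open(path, "rb") as f:
        return pickle.load(f)


def shaped_entries(shaping):
    """Yield (node, address, params) for entries with any non-None setting."""
    for node, peers in sorted(shaping.items()):
        for address, params in sorted(peers.items()):
            if any(value is not None for value in params.values()):
                yield node, address, params


# Two entries in the same shape as the dump above.
sample = {
    "animal": {"10.0.0.1": {"bandwidth": None, "delay": None, "loss": None}},
    "rizzo": {"10.0.0.2": {"bandwidth": None, "delay": None, "loss": None}},
}
print(list(shaped_entries(sample)))  # [] because every value is None
```

Against the real file: list(shaped_entries(load_shaping("traffic_shaping.pickle"))).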

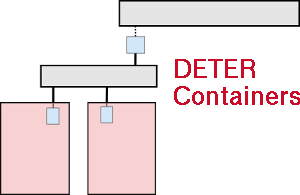

visualization.png - image of the topology of the container networks.

wirefilters.yaml - empty YAML file: {}

Per-Experiment Dynamic Files

On the physical nodes these files end up in /space/local/containers (symlinked from /var/containers); on the container nodes they are in /var/containers. Presumably this directory is populated by the experiment start command, sudo /share/containers/setup/hv/bootstrap /proj/Deter/exp/2x4-cont/containers/site.conf. The listing and examples below come from a different experiment, Deter/2x4-cont.

```
total 56
drwxr-xr-x  2 root    root 4096 Feb 15 08:06 bin
drwxr-xr-x 10 glawler root 4096 Feb 15 08:10 config
-rw-r--r--  1 root    root    0 Feb 15 08:10 configured
-rw-r--r--  1 root    root    9 Feb 15 08:06 eid
drwxr-xr-x  2 root    root 4096 Feb 15 08:06 etc
drwxr-xr-x  2 root    root 4096 Feb 15 08:09 images
drwxr-xr-x  7 root    root 4096 Feb 15 08:06 launch
drwxr-xr-x  2 root    root 4096 Feb 15 08:10 lib
drwxr-xr-x  2 root    root 4096 Feb 15 08:10 log
drwxr-xr-x  2 root    root 4096 Feb 15 08:07 mnt
drwxr-xr-x  6 root    root 4096 Feb 15 08:06 packages
-rw-r--r--  1 root    root    6 Feb 15 08:06 pid
drwxr-xr-x  7 root    root 4096 Feb 15 08:06 setup
drwxr-xr-x  4 root    root 4096 Feb 15 08:10 vde
drwxr-xr-x  2 root    root 4096 Feb 15 08:10 vmchannel
```

bin/info.py - Prints basic information about the node and experiment to stdout:

```
[(buffynet) glawler@a:/var/containers/bin]$ ./info.py --all
NODE=a, PROJECT=Deter, EXPERIMENT=2x4-cont
TYPE=qemu
NAME=inf000, INET=10.0.0.4, MASK=255.255.255.0, MAC=00:00:00:00:00:04
NAME=control0, INET=172.16.183.35, MASK=255.240.0.0, MAC=00:66:00:00:b7:23
CONTROL=control0, INET=172.16.183.35, MASK=255.240.0.0, MAC=00:66:00:00:b7:23
```
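The output consists of comma-separated KEY=VALUE pairs. Assuming one record per line (the line breaks are reconstructed from a flattened listing), a script running in a container could pick out its experiment-network addresses like this; parse_info and experiment_addresses are hypothetical helpers, not part of info.py:

```python
SAMPLE_OUTPUT = """\
NODE=a, PROJECT=Deter, EXPERIMENT=2x4-cont
TYPE=qemu
NAME=inf000, INET=10.0.0.4, MASK=255.255.255.0, MAC=00:00:00:00:00:04
NAME=control0, INET=172.16.183.35, MASK=255.240.0.0, MAC=00:66:00:00:b7:23
CONTROL=control0, INET=172.16.183.35, MASK=255.240.0.0, MAC=00:66:00:00:b7:23
"""


def parse_info(output):
    """Turn each line of KEY=VALUE pairs into a dict (one dict per line)."""
    records = []
    for line in output.splitlines():
        if "=" not in line:
            continue  # skip blank or free-form lines
        pairs = (item.split("=", 1) for item in line.split(",") if "=" in item)
        records.append({key.strip(): value.strip() for key, value in pairs})
    return records


def experiment_addresses(records):
    """Return {interface: address} for every interface except the control one."""
    control = next((r["CONTROL"] for r in records if "CONTROL" in r), None)
    return {r["NAME"]: r["INET"] for r in records
            if "NAME" in r and "INET" in r and r["NAME"] != control}


records = parse_info(SAMPLE_OUTPUT)
print(experiment_addresses(records))  # {'inf000': '10.0.0.4'}
```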

/var/containers/launch/hv/hv - The container startup script. It is invoked after the emulab-configured event, which is triggered once the control network interface is configured; this is where everything starts. HV presumably stands for "hypervisor", and this script presumably runs only on the pnode.

/etc/rc.local - On the container nodes, this script mounts the appropriate directories, calls a container-type-specific bootstrap script, sets up routes (by calling /var/containers/launch/routes.py /var/containers/config), and runs the start command from the original NS file (if one exists).

/share/containers/ Files

Call tree for the runtime containers. The setup and launch scripts are static. The containerize script (presumably) generates the "children" directory, which describes which scripts should be invoked on which nodes and, implicitly, the order in which they fire. The files in children use stage:type:hostname both as file names and in file contents, which is confusing. hv stands for "hypervisor".

- setup/hv/bootstrap
- setup/hv/bootstrap_qemu (unclear why this is called)
- setup/hv/hv
- setup/hv/substrate_ip
- setup/hv/if_mapping
- per-experiment setup scripts described by /var/containers/config/children/hv:hv:[hostname] (always the pnode hv setup?)
  - example: /var/containers/config/children/hv:hv:pnode-0000 ends up calling
    - setup/hv/qemu (when there are qemu nodes "a", "c", "g", "f" on pnode-0000), which calls
      - setup/qemu/19_net_config_files.py /var/containers/config a c g f
      - setup/qemu/20_vnc_display.py /var/containers/config a c g f
      - setup/qemu/30_accounts_to_yaml.py /var/containers/config a c g f
      - setup/qemu/35_yaml_to_passwd.py /var/containers/config a c g f
      - setup/qemu/40_file_systems.py /var/containers/config a c g f
      - setup/qemu/50_root_fs.py /var/containers/config a c g f
      - setup/qemu/90_launch_commands.py /var/containers/config a c g f
- launch/hv/hv
  - launch/embedded_pnode/hv (on the embedded pnode only)
  - launch/[CHILD]/hv [nodename], where [CHILD] is the child name from the children file and [nodename] is the hostname of the machine
    - example: qemu on a pnode
      - launch/qemu/control_bridge.py
        - It then loops forever, reading messages and responding to them (see the file for the responses). It does a lot of work to talk to the IRC daemon on boss, which is not running. It also handles notifications that qemu processes were killed, plus other messages from itself and from hv.lib.
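Two details of the call tree can be expressed as small helpers: a children file name splits into stage, type, and hostname, and the setup/qemu scripts appear to run in the order of their numeric prefixes, each receiving the config directory followed by the node names. A sketch under those assumptions (both helper names are hypothetical; the real invocation happens in setup/hv/qemu):

```python
def parse_child_name(filename):
    """Split a children file name like 'hv:hv:pnode-0000' into its three fields."""
    stage, ctype, host = filename.split(":", 2)
    return {"stage": stage, "type": ctype, "host": host}


def setup_commands(scripts, config_dir, nodes):
    """Order numbered setup scripts and build one argv list per script.

    Assumes the two-digit prefixes (19_, 20_, ...) define the run order,
    so a lexical sort on each script's file name is enough.
    """
    ordered = sorted(scripts, key=lambda path: path.rsplit("/", 1)[-1])
    return [[script, config_dir, *nodes] for script in ordered]


print(parse_child_name("hv:hv:pnode-0000"))
for argv in setup_commands(
    ["setup/qemu/50_root_fs.py", "setup/qemu/19_net_config_files.py"],
    "/var/containers/config",
    ["a", "c", "g", "f"],
):
    print(" ".join(argv))
```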

Documentation for select files from the containers repo is below.

setup/hv/bootstrap - The script executed as the DETER start_cmd on the pnodes once the experiment is swapped in. It reads the experiment-specific conf file, then sets up the local containers working directory (presumably /var/containers, populated from /share/containers). It then installs various packages, both for DETER and platform-specific ones ("deter_data" and a VDE build). Finally, it runs /var/containers/setup/hv/hv to do the actual work; that script is described below.

/var/containers/setup/hv/hv - Invoked by the start_cmd script on the pnode after the node has booted and before the containers are up. It untars the ${EXP}/containers directory into /var/containers/config and writes the /etc/{init,init.d} containers control (start/stop) script. Next it reads the children file and calls the per-child setup scripts in setup/hv/* (although I could not find these). Finally it invokes the /etc/init/containers platform script (on Ubuntu, via "start containers"), which maps to /var/containers/launch/hv/hv, described below.

/var/containers/launch/hv/hv - The script invoked by setup/hv/hv on the pnode. It sets up the tap pipes and bridges that connect VDE switches across pnodes, and creates the VDE switches. It then calls /var/containers/launch/%s/hv, where %s is the child name, for each such script that exists. There is one of these scripts for each supported container type: embedded_pnode, qemu, openvz, and process. The qemu version is explained below.
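The dispatch step, calling launch/<child>/hv only when that script exists, can be sketched as follows. This is an illustration, not the actual launch/hv/hv code; launch_commands and its (container_type, nodename) input pairs are assumptions:

```python
import os


def launch_commands(launch_root, children):
    """Build [script, nodename] argv lists for children whose launch script exists.

    children is an iterable of (container_type, nodename) pairs, where the
    type is one of embedded_pnode, qemu, openvz, or process.
    """
    commands = []
    for ctype, nodename in children:
        script = os.path.join(launch_root, ctype, "hv")
        if os.path.isfile(script):  # skip types that have no launch script
            commands.append([script, nodename])
    return commands
```

For example, launch_commands("/var/containers/launch", [("qemu", "pnode-0000")]) yields [["/var/containers/launch/qemu/hv", "pnode-0000"]] when that script is present.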

/var/containers/launch/qemu/hv - Invoked at the end of the launch sequence on a pnode to spawn the qemu containers that will run on that pnode. (The VDE networking is set up before this script is invoked.) The log is written to /var/containers/log/hv_qemu.log. It builds something called "vncboot" (purpose unclear). The script then reads the per-experiment YAML config files, mounts the qemu image on the physical host (via qemu-nbd), and writes the config information, including rc.local, to the qemu disk. This assumes an Ubuntu image, which is a problem: there does not seem to be any OS or platform checking at all. Then it starts an IRC-based control channel to a server running on boss. However, no ircd is currently running on boss, so is this some sort of legacy command/control channel for containers? It is also not yet clear where the qemu instances are actually started.
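For reference, mounting a disk image through qemu-nbd usually takes the command sequence below. The device (/dev/nbd0), partition number, and max_part value are assumptions, not values taken from launch/qemu/hv; the hypothetical helper only builds the argument lists, which would be run as root, e.g. with subprocess.run(cmd, check=True):

```python
def nbd_mount_commands(image, mountpoint, device="/dev/nbd0", partition=1):
    """Return (mount, unmount) command lists for exposing an image via nbd."""
    part = f"{device}p{partition}"
    mount = [
        ["modprobe", "nbd", "max_part=8"],           # load nbd with partition support
        ["qemu-nbd", f"--connect={device}", image],  # attach the image to the device
        ["mount", part, mountpoint],                 # mount the chosen partition
    ]
    unmount = [
        ["umount", mountpoint],
        ["qemu-nbd", "--disconnect", device],        # detach the image again
    ]
    return mount, unmount
```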