
Reference Guide

This document describes the details of the commands and data structures that make up the containers system. The User Guide/Tutorial provides useful context about the workflows and goals of the system that inform these technical details.

Commands

This describes the command line interface to the containers system.

containerize.py

The containerize.py command creates a DETER experiment made up of containers. The containerize.py program is available from /share/containers/containerize.py on users.isi.deterlab.net. A sample invocation is:

$ /share/containers/containerize.py MyProject MyExperiment ~/mytopology.tcl

It creates a new experiment in MyProject called MyExperiment containing the experiment topology in mytopology.tcl. All the topology creation commands supported by DETER are supported by the containerization system, but Emulab/DETER program agents are not. Emulab/DETER start commands are supported.

containerize.py creates an experiment in a group if the project parameter is of the form project/group. For example, to create an experiment in the testing group of the DETER project, specify the first parameter as DETER/testing.

Either an ns2 file or a topdl description is supported. Ns2 descriptions must end with .tcl or .ns. Other files are assumed to be topdl descriptions.
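The file-type rule above is simple enough to state as code. This is a minimal sketch of the rule as written, not containerize.py's own implementation; the function name topology_format is hypothetical, and the match is case-sensitive because the text does not say otherwise.

```python
def topology_format(path: str) -> str:
    """Return "ns2" or "topdl" for a topology file name (hypothetical helper).

    Mirrors the rule in the text: names ending in .tcl or .ns are ns2,
    everything else is assumed to be topdl. Case-sensitive here; whether
    containerize.py ignores case is not documented.
    """
    return "ns2" if path.endswith((".tcl", ".ns")) else "topdl"

for name in ("~/mytopology.tcl", "~/experiment.xml"):
    print(name, "->", topology_format(name))
```

Note that a name such as topology.tcl.bak would be treated as topdl under this rule.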

By default, containerize.py partitions the topology into openvz containers, packed 10 containers per physical computer. If the topology is already partitioned (that is, at least one element has a containers:partition attribute), containerize.py does not partition it. The --force-partition flag causes containerize.py to partition the experiment regardless of the presence of containers:partition attributes.
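The partitioning decision described above can be sketched as follows. The representation of nodes as attribute dictionaries and both helper names are hypothetical. The host-count estimate is only a rough lower bound for a given packing: it ignores embedded physical nodes and any other constraint the real partitioner may apply.

```python
import math

def needs_partition(nodes, force_partition=False):
    """nodes: one dict of attributes per topology element (hypothetical form).

    Partition when --force-partition is given, or when no element already
    carries a containers:partition attribute.
    """
    if force_partition:
        return True
    return not any("containers:partition" in attrs for attrs in nodes)

def min_physical_nodes(num_containers, packing=10):
    """Rough lower bound on host nodes needed at a given packing (hypothetical)."""
    return math.ceil(num_containers / packing)

print(needs_partition([{}, {"containers:partition": "1"}]))  # False
print(min_physical_nodes(95))                                # 10
```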

If container types have been assigned to nodes using the containers:node_type attribute, containerize.py will respect them. Valid container types for the containers:node_type attribute or the --default-container parameter are:

| Parameter | Container |
|---|---|
| embedded_pnode | Physical Node |
| qemu | Qemu VM |
| openvz | Openvz Container |
| process | ViewOS process |
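A short sketch of how the valid types and a default combine: nodes without a containers:node_type get the default (openvz, per the partitioning behavior described above), while explicitly typed nodes keep their type. The mapping and the function assign_container_types are hypothetical illustrations. This is not containerize.py's code.

```python
VALID_CONTAINER_TYPES = {
    "embedded_pnode": "Physical Node",
    "qemu": "Qemu VM",
    "openvz": "Openvz Container",
    "process": "ViewOS process",
}

def assign_container_types(node_types, default="openvz"):
    """node_types maps node name -> containers:node_type value, or None if unset."""
    if default not in VALID_CONTAINER_TYPES:
        raise ValueError(f"unknown container type: {default}")
    result = {}
    for name, kind in node_types.items():
        if kind is None:
            kind = default  # untyped nodes go into the default container kind
        elif kind not in VALID_CONTAINER_TYPES:
            raise ValueError(f"{name}: unknown container type {kind}")
        result[name] = kind  # explicitly typed nodes are respected
    return result

print(assign_container_types({"a": None, "b": "openvz"}, default="qemu"))
```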

The containerize.py command takes several parameters that can change its behavior:

--default-container=kind- Containerize nodes without a container type into kind. If no nodes have been assigned containers, this puts all of them into kind containers.

--force-partition- Partition the experiment whether or not it has been partitioned already.

--packing=int- Attempt to put int containers into each physical node. The default --packing is 10.

--config=filename- Read configuration variables from filename. The configuration values are discussed below.

--pnode-types=type1[,type2...]- Override the site configuration and request nodes of type1 (or type2 etc.) as host nodes.

--end-node-shaping- Attempt to do end node traffic shaping even in containers connected by VDE switches. This works with qemu nodes, but not process nodes. Topologies that include both openvz nodes and qemu nodes that shape traffic should use this. See the discussion below.

--vde-switch-shaping- Do traffic shaping in VDE switches. Whether this is the default is controlled by the site configuration (switch_shaping); in the standard DETER site configuration it is. See the discussion below.

--openvz-diskspace- Set the default openvz disk space size. The suffixes G and M stand for gigabytes and megabytes.

--openvz-template- Set the default openvz template. Templates are described in the User Guide.

--image- Construct a visualization of the virtual topology and leave it in the experiment directories (default)

--no-image- Do not construct a visualization of the virtual topology.

--debug- Print additional diagnostics and leave failed DETER experiments on the testbed

--keep-tmp- Do not remove temporary files - for debugging only

This invocation:

$ ./containerize.py --packing 25 --default-container=qemu --force-partition DeterTest faber-packem ~/experiment.xml

takes the topology in ~/experiment.xml (which must be topdl, since the name does not end in .tcl or .ns), packs 25 qemu containers onto each physical node, and creates an experiment called faber-packem in the DeterTest project that can be swapped in. If experiment.xml is already partitioned, it is re-partitioned. If some nodes in that topology were already assigned to openvz containers, those nodes will still be in openvz containers.
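For scripted experiment creation, the invocation above can be assembled programmatically. The helpers below are a hypothetical sketch that only uses the options documented in this section. run_containerize assumes containerize.py reports failure through a non-zero exit status, which this page does not state.

```python
import subprocess

# Path given in the text above; adjust if you run a developer copy.
CONTAINERIZE = "/share/containers/containerize.py"

def containerize_argv(project, experiment, topology, packing=None,
                      default_container=None, force_partition=False):
    """Build an argv list for containerize.py (hypothetical helper)."""
    argv = [CONTAINERIZE]
    if packing is not None:
        argv.append(f"--packing={packing}")
    if default_container is not None:
        argv.append(f"--default-container={default_container}")
    if force_partition:
        argv.append("--force-partition")
    # Positional parameters come last: project[/group], experiment, topology file.
    argv += [project, experiment, topology]
    return argv

def run_containerize(*args, **kwargs):
    """Run containerize.py; check=True raises CalledProcessError on a non-zero exit."""
    return subprocess.run(containerize_argv(*args, **kwargs), check=True)
```

subprocess does not expand ~, so pass a full path, for example os.path.expanduser("~/experiment.xml"), as the topology argument.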

The result of a successful containerize.py run is a DETER experiment that can be swapped in.

More detailed examples are available in the tutorial.

container_image.py

The container_image.py command draws a picture of the topology of an experiment. This is helpful in keeping track of how virtual nodes are connected. containerize.py calls it internally and stores the output in the per-experiment directory (unless --no-image is used).

A researcher may call container_image.py directly to generate an image later or to generate one with the partitioning drawn.

The simplest way to call container_image.py is:

/share/containers/container_image.py topology.xml output.png

The first parameter is a topdl description, for example the one in the per-experiment directory. The second parameter is the output file for the image. When drawing an experiment that has been containerized, the --experiment option is very useful.

Options include:

--experiment=project/experiment- Draw the experiment in project/experiment, if it exists. Use only the DETER project and experiment names here; omit any sub-group.

--topo=filename- Draw the topology in filename.

--attr-prefix=prefix- Prefix for containers attributes. Deprecated.

--partitions- Draw labeled boxes around nodes that share a physical node.

--out=filename- Save the image in filename

If neither --topo nor --experiment is given, the first positional parameter is the topdl topology to draw. If --out is not given, the next positional parameter (the first one if --topo or --experiment is given) is the output file.
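The positional-parameter rules for container_image.py can be sketched as a small resolver. This illustrates the rules as described, not the tool's actual argument parsing. The function name is hypothetical, and the precedence when both --topo and --experiment are given is an assumption (this sketch prefers --topo).

```python
def resolve_image_args(positionals, topo=None, experiment=None, out=None):
    """Work out (source, output) for container_image.py per the rules above.

    source is ("topo", filename) or ("experiment", "project/experiment").
    If both --topo and --experiment are given, this sketch prefers --topo;
    the real tool's precedence is not documented here.
    """
    remaining = list(positionals)

    def take(what):
        if not remaining:
            raise ValueError(f"missing positional parameter: {what}")
        return remaining.pop(0)

    if topo is not None:
        source = ("topo", topo)
    elif experiment is not None:
        source = ("experiment", experiment)
    else:
        source = ("topo", take("topdl topology"))  # first positional is the topology
    output = out if out is not None else take("output file")
    return source, output

print(resolve_image_args(["topology.xml", "output.png"]))
print(resolve_image_args(["drawing.png"], experiment="SAFER/testbed-containers"))
```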

A common invocation looks like:

/share/containers/container_image.py --experiment SAFER/testbed-containers ~/drawing.png

Configuration Files

Per-experiment Directory

When an experiment is containerized, the data necessary to create it is stored in /proj/project/exp/experiment/containers. The /proj/project/exp/experiment directory is created by DETER when the experiment is created, and is used by experimenters for a variety of things.

There are a few files in the per-experiment directory that most experimenters can use:

experiment.tcl- If the topology was passed to containerize.py as an ns file, this is a copy of that input file. Useful for seeing what the experimenter asked for, or as a basis for new experiments.

experiment.xml- The analogue of experiment.tcl if the topology was given as topdl: a copy of the topdl input file.

visualization.png- A drawing of the virtual topology in png format, generated by container_image.py.

hosts- The host-to-IP mapping that will be installed on each node as /etc/hosts.

site.conf- A clone of the site configuration file that holds the global variables that container creation uses. Values overridden on the containerize.py command line are present in this file.
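To locate these files from a script, the per-experiment directory path can be built from the project and experiment names. This is a minimal sketch: the helper names are hypothetical, and it assumes a plain project name, since how group experiments are laid out is not covered here.

```python
import os.path
import posixpath

# Files the text above singles out as useful to most experimenters.
# Only one of experiment.tcl / experiment.xml exists, depending on the input format.
USER_FILES = ("experiment.tcl", "experiment.xml", "visualization.png", "hosts", "site.conf")

def containers_dir(project, experiment):
    """Per-experiment directory: /proj/<project>/exp/<experiment>/containers."""
    return posixpath.join("/proj", project, "exp", experiment, "containers")

def present_user_files(project, experiment):
    """List which of USER_FILES exist (empty if the directory is absent)."""
    directory = containers_dir(project, experiment)
    return [name for name in USER_FILES
            if os.path.isfile(posixpath.join(directory, name))]

print(containers_dir("SAFER", "testbed-containers"))
```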

The rest of this directory is primarily of interest to developers. It includes:

annotated.xml- First version of the input topology after default container types have been added. Input to the partitioning step.

assignment- A yaml representation of the partition to virtual node mapping.

backend_config.yaml- The server and channel to use for grandstand communication. Encoded in yaml.

children- Directory containing the assignment, including all the levels of nested hypervisors.

config.tgz- The contents of the per-experiment directory (except config.tgz) for distribution into the experiment.

embedding.yaml- A yaml-encoded representation of the children sub-directory.

ghosts- Containers that are initially not started in the experiment.

maverick_url- Yaml encoding of the qemu images to be used on each node.

openvz_guest_url- Yaml encoding of the openvz templates to be used on each node.

partitioned.xml- Output of the partitioning process: a copy of annotated.xml that has been decorated with the partitions.

phys_topo.ns- The ns2 file used to create the DETER experiment.

phys_topo.xml- The topdl file used to generate phys_topo.ns.

pid_eid- The DETER project and experiment name under which this topology will be created. Broken out into /var/containers/pid and /var/containers/eid on virtual nodes inside the topology.

route- A directory containing the routing tables for each node.

shaping.yaml- Yaml-encoded data about the per-network and per-node loss, delay, and capacity parameters.

switch- A directory containing the VDE switch topology for the experiment.

switch_extra.yaml- Yaml-encoded extra switch configuration information. Mostly VDE switch configuration esoterica.

topo.xml- The final topology representation from which the physical topology is extracted. Includes the virtual topology as well. This file can be used as input to container_image.py.

traffic_shaping.pickle- Pickled information for configuring end-node traffic shaping.

wirefilters.yaml- Specific parameters for configuring the delay elements in VDE-switched topologies that implement traffic shaping. See below.

Site Configuration File

The site configuration file controls how all experiments are containerized across DETER. The contents are primarily of interest to developers, but researchers may occasionally find the need to specify their own. The --config parameter to containerize.py does that.

The site configuration file is an attribute-value pair file, parsed by a Python ConfigParser, that sets overall container parameters. Many of the attributes have legacy internal names.

The default site configuration is in /share/containers/site.conf on users.isi.deterlab.net.

Acceptable values (and their DETER defaults) are:

- backend_server: The IRC server used as a backend coordination service for grandstand. Will be replaced by MAGI. Default: boss.isi.deterlab.net:6667
- grandstand_port: Port on which third-party applications can contact grandstand. Will be replaced by MAGI. Default: 4919
- maverick_url: Default image used by qemu containers. Default: http://scratch/benito/pangolinbz.img.bz2
- url_base: Base URL of the DETER web interface on which users can see experiments. Default: http://www.isi.deterlab.net/
- qemu_host_hw: Hardware types requested as container host nodes. Default: pc2133,bpc2133,MicroCloud
- xmlrpc_server: Host and port from which to request experiment creation. Default: boss.isi.deterlab.net:3069
- qemu_host_os: OSID to request for qemu container nodes. Default: Ubuntu1204-64-STD
- exec_root: Root of the directory tree holding containers software and libraries. Developers often change this. Default: /share/containers
- openvz_host_os: OSID to request for openvz nodes. Default: CentOS6-64-openvz
- openvz_guest_url: Location to load the openvz template from. Default: %(exec_root)s/images/ubuntu-10.04-x86.tar.gz
- switch_shaping: True if switched containers (see below) should do traffic shaping in the VDE switch that connects them. Default: true
- switched_containers: A list of the containers that are networked with VDE switches. Default: qemu,process
- openvz_template_dir: The directory that stores openvz template files. Default: %(exec_root)s/images/ (that is, the images directory in the exec_root directory defined in the site config file)
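The %(exec_root)s values are standard ConfigParser interpolation. The sketch below parses a hypothetical excerpt with Python 3's configparser. The section name [containers] is an assumption, so check /share/containers/site.conf for the real one. The layering shown by load_site is a ConfigParser feature and is not necessarily how --config merges with the site defaults.

```python
import configparser

# Hypothetical excerpt of a site configuration file; the [containers]
# section name is an assumption.
SITE_CONF = """\
[containers]
exec_root = /share/containers
openvz_guest_url = %(exec_root)s/images/ubuntu-10.04-x86.tar.gz
openvz_template_dir = %(exec_root)s/images/
switch_shaping = true
switched_containers = qemu,process
"""

def load_site(*sources, section="containers"):
    """Parse one or more config texts; values read later override earlier ones."""
    parser = configparser.ConfigParser()
    for text in sources:
        parser.read_string(text)
    return parser[section]

site = load_site(SITE_CONF)
print(site["openvz_guest_url"])                # /share/containers/images/ubuntu-10.04-x86.tar.gz
print(site.getboolean("switch_shaping"))       # True
print(site["switched_containers"].split(","))  # ['qemu', 'process']

# Interpolation happens when a value is read, so overriding exec_root
# moves every value derived from it.
dev = load_site(SITE_CONF, "[containers]\nexec_root = /home/me/containers\n")
print(dev["openvz_template_dir"])              # /home/me/containers/images/
```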

Container Notes

Different container types have some quirks. This section lists limitations of each container, as well as issues in interconnecting them.

Qemu

Qemu nodes are limited to 7 experimental interfaces. They currently run only Ubuntu 12.04 32 bit operating systems.

ViewOS Processes

ViewOS processes cannot be logged into and do not work as conventional machines. Instead, a process tree rooted in the start command is created, so a service runs with its own view of the network. A ViewOS process does not have an address on the control net.

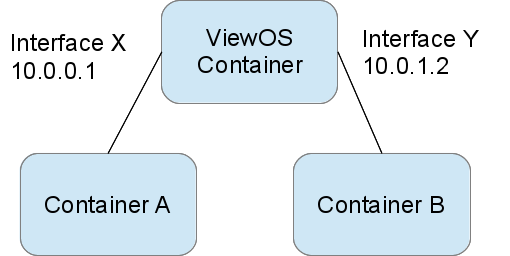

Because of a bug in their internal routing, multi-homed processes do not respond correctly for requests on some interfaces. A ViewOS process does not recognize its other addresses when a packe arrives on a different interface. A picture makes this clearer:

Container A can ping Interface X (10.0.0.1) of the ViewOS container successfully, but if Container A tries to ping Interface Y (10.0.1.2) the ViewOS container will not reply (in fact it will send ARP requests on Interface Y looking for its own address).

ViewOS processes are best used as lightweight forwarders for this reason.

Physical Nodes

Physical nodes can be incorporated into experiments, but should only use modern versions of Ubuntu, to allow the container system to run their start commands correctly and to initialize their routing tables.

Interconnections: VDE switches and local networking

The various containers are interconnected using either local kernel virtual networking or VDE switches. Kernel networking is lower overhead because it does not require process context switching, but VDE switches are a more general solution.

Network behavior changes - loss, delay, rate limits - are introduced into a network of containers using one of two mechanisms: inserting elements into a VDE switch topology or end node traffic shaping. Inserting elements into the VDE switch topology allows the system to modify the behavior for all packets passing through it. Generally this means all packets to or from a host, as the container system inserts these elements in the path between the node and the switch.

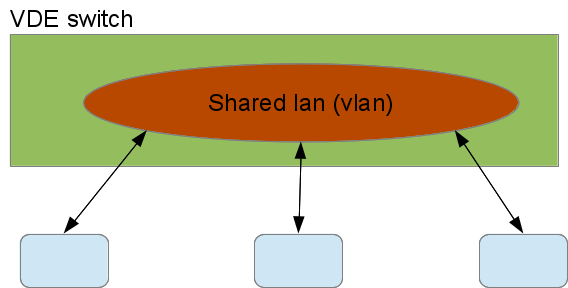

This figure shows 3 containers sharing a virtual LAN (VLAN) on a VDE switch with no traffic shaping:

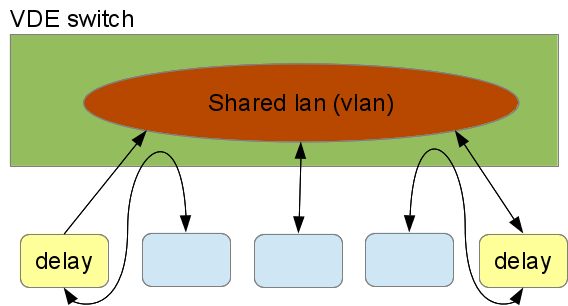

The blue containers connect to the switch and the switch has interconnected their VDE ports into the red shared VLAN. To add delays to tow of the nodes on that VLAN, the following VDE switch configuration would be used:

The VDE switch connects the containers with shaped traffic to the delay elements, not to the shared VLAN. The delay elements are on the VLAN and delay all traffic passing through them. The container system configures the delay elements to delay traffic symmetrically: traffic from the LAN and traffic from the container are both delayed. The VDE tools can be configured asymmetrically as well. This is a very flexible way to interconnect containers.

That flexibility incurs a cost in overhead. Each delay element and the VDE switch is a separate process, so traffic passing from one delayed node to the other crosses between processes 6 times: container -> switch, switch -> delay, delay -> switch, switch -> delay, delay -> switch, and switch -> container. Each of those crossings is a context switch.
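The overhead argument is easy to check by listing the processes a packet visits. This hypothetical model follows the description above: one delay element per shaped node, each attached to the switch. It counts transitions between consecutive processes. It does not measure real scheduler behavior.

```python
def vde_path(src_shaped, dst_shaped):
    """Processes a packet visits between two containers on one VDE VLAN."""
    hops = ["container A", "switch"]
    if src_shaped:
        hops += ["delay A", "switch"]  # A's port leads to its delay element first
    if dst_shaped:
        hops += ["delay B", "switch"]  # B's delay element sits between the VLAN and B
    hops.append("container B")
    return hops

def process_transitions(src_shaped, dst_shaped):
    """Number of process-to-process crossings along the path."""
    return len(vde_path(src_shaped, dst_shaped)) - 1

print(" -> ".join(vde_path(True, True)))
print(process_transitions(True, True), process_transitions(False, False))  # 6 2
```

Each shaped endpoint adds two crossings to the unshaped baseline of two.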

The alternative mechanism is to do the traffic shaping inside the nodes, using Linux traffic shaping. In this case, traffic outbound from a container is delayed in the container for the full transit time to the next hop. The next node does the same. End-node shaping all happens in the kernel, so it is relatively inexpensive at run time.
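For a concrete sense of egress-only shaping in Linux, the helper below builds a tc netem command. It is illustrative only. This page does not say which qdiscs the containers system actually configures, and the interface name eth1 is hypothetical. Running the command requires root on the node.

```python
def netem_argv(dev, delay_ms=None, loss_pct=None, rate=None):
    """Build a Linux `tc` command that shapes traffic leaving interface dev.

    netem on the root qdisc acts on egress only, so the sender applies the
    whole link delay, as in the end-node scheme described above.
    """
    argv = ["tc", "qdisc", "replace", "dev", dev, "root", "netem"]
    if delay_ms is not None:
        argv += ["delay", f"{delay_ms}ms"]
    if loss_pct is not None:
        argv += ["loss", f"{loss_pct}%"]
    if rate is not None:
        argv += ["rate", rate]  # e.g. "10mbit"; needs netem rate support in kernel/iproute2
    return argv

print(" ".join(netem_argv("eth1", delay_ms=10, loss_pct=1)))
# tc qdisc replace dev eth1 root netem delay 10ms loss 1%
```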

Qemu nodes can use either end-node shaping or VDE shaping, and use VDE shaping by default. The --end-node-shaping and --vde-switch-shaping options to containerize.py force the choice for qemu nodes.

ViewOS processes can only use VDE shaping. Their network stack emulation is not rich enough to include traffic shaping.

Openvz nodes only use end-node traffic shaping. They have no native VDE support, so interconnecting openvz containers to VDE switches would add both extra kernel crossings and extra context switches. Because a primary attraction of openvz containers is their efficiency, the containers system does not implement VDE interconnections to openvz.

Similarly, embedded physical nodes use only end-node traffic shaping, as routing outgoing traffic through a virtual switch infrastructure that only connects to their physical interfaces is confusing at best.

Unfortunately, end-node traffic shaping and VDE shaping are incompatible. Because end-node shaping does not impose delays on arriving traffic, it cannot correctly delay traffic from a VDE-delayed node.

This is primarily of academic interest unless a researcher wants to shape traffic between containers that use incompatible shaping mechanisms, for example between an openvz node (end-node shaping only) and a qemu node using VDE shaping. In that case, there needs to be an unshaped link between the two kinds of traffic shaping.
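The compatibility rules in this section can be summarized as a lookup. This is a hypothetical sketch. It assumes the site default switch_shaping = true, so qemu uses VDE shaping unless --end-node-shaping is given. It also models only that one flag and does not model --vde-switch-shaping.

```python
def shaping_mechanism(container_type, end_node_shaping=False):
    """Which shaping mechanism a container type ends up with, per the notes above."""
    if container_type in ("openvz", "embedded_pnode"):
        return "end-node"
    if container_type == "process":
        return "vde"  # ViewOS processes cannot do end-node shaping
    if container_type == "qemu":
        return "end-node" if end_node_shaping else "vde"
    raise ValueError(f"unknown container type: {container_type}")

def needs_unshaped_link(type_a, type_b, end_node_shaping=False):
    """True if a shaped link between the two types would mix incompatible mechanisms."""
    return (shaping_mechanism(type_a, end_node_shaping)
            != shaping_mechanism(type_b, end_node_shaping))

print(needs_unshaped_link("openvz", "qemu"))                         # True
print(needs_unshaped_link("openvz", "qemu", end_node_shaping=True))  # False
```

This matches the advice for --end-node-shaping: topologies that mix openvz and qemu nodes with shaped traffic avoid the conflict by moving qemu to end-node shaping.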

Attachments (3)

- Unshaped.png (26.1 KB) - Unshaped traffic through a VDE switch
- shaped.png (35.9 KB) - Traffic shaping using VDE
- viewos.png (24.2 KB) - ViewOS ping diagram
